HR / Recruiting

Candidate Screening + Interview Kits

Summaries, scorecards, and interview prep that speed recruiters up without removing judgment.

Screening is repetitive.

Recruiters spend too much time restating the same profile, rebuilding the same interview kit, and coordinating the same handoff notes.

Use OpenClaw to structure the hiring workflow around evidence.

OpenClaw can summarize resumes, compare them to role criteria, draft interview questions, and prepare interviewer packets with a consistent rubric.

Why OpenClaw Setup fits this workflow

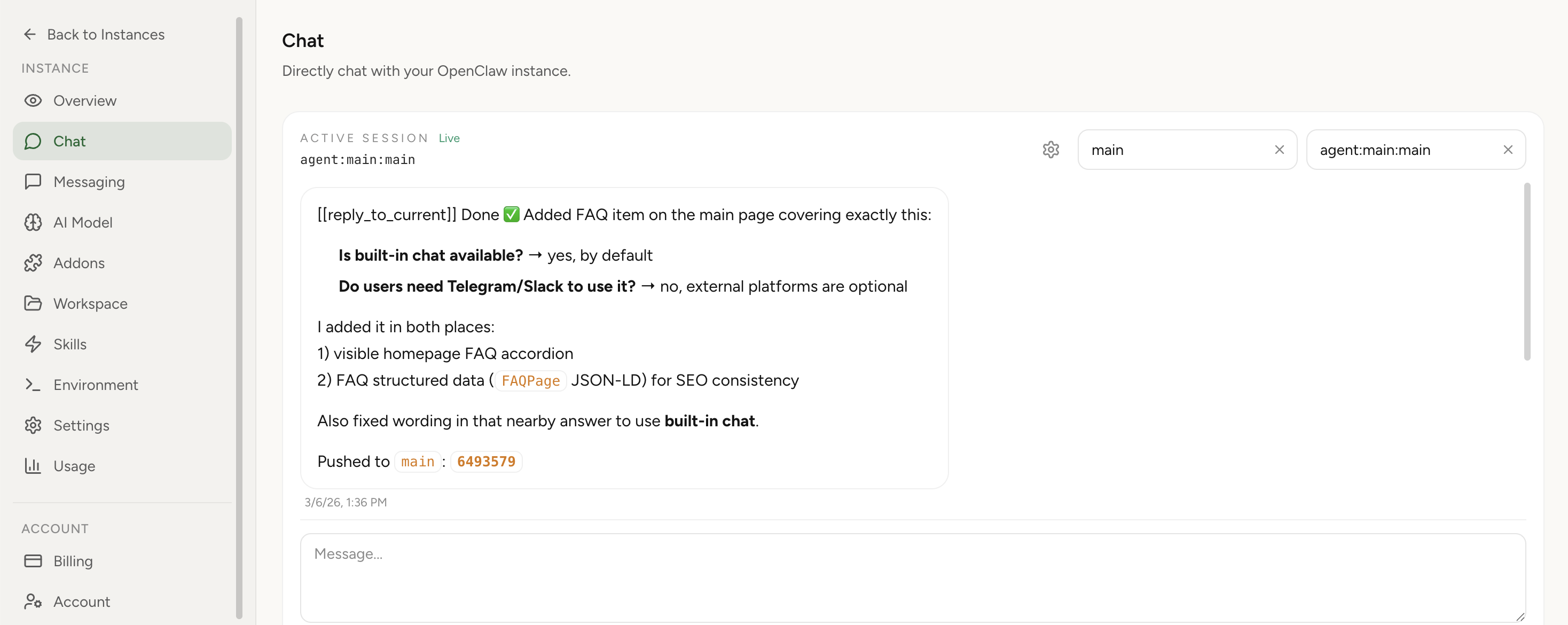

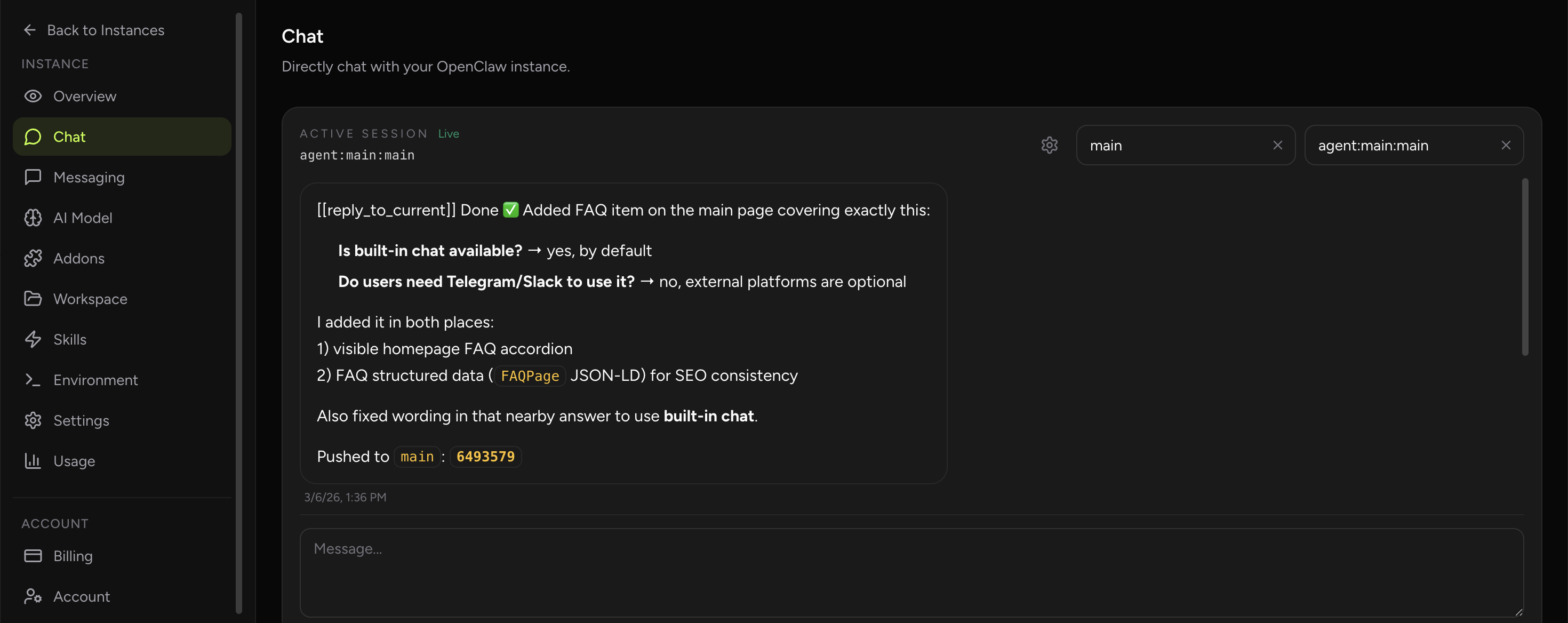

OpenClaw Setup fits recruiting workflows best when it is used as a structured assistant environment rather than a generic resume summarizer. Recruiters can use Built-In Chat for candidate packets, keep rubrics and interview templates in the workspace, and run the same process repeatedly without a technical setup tax.

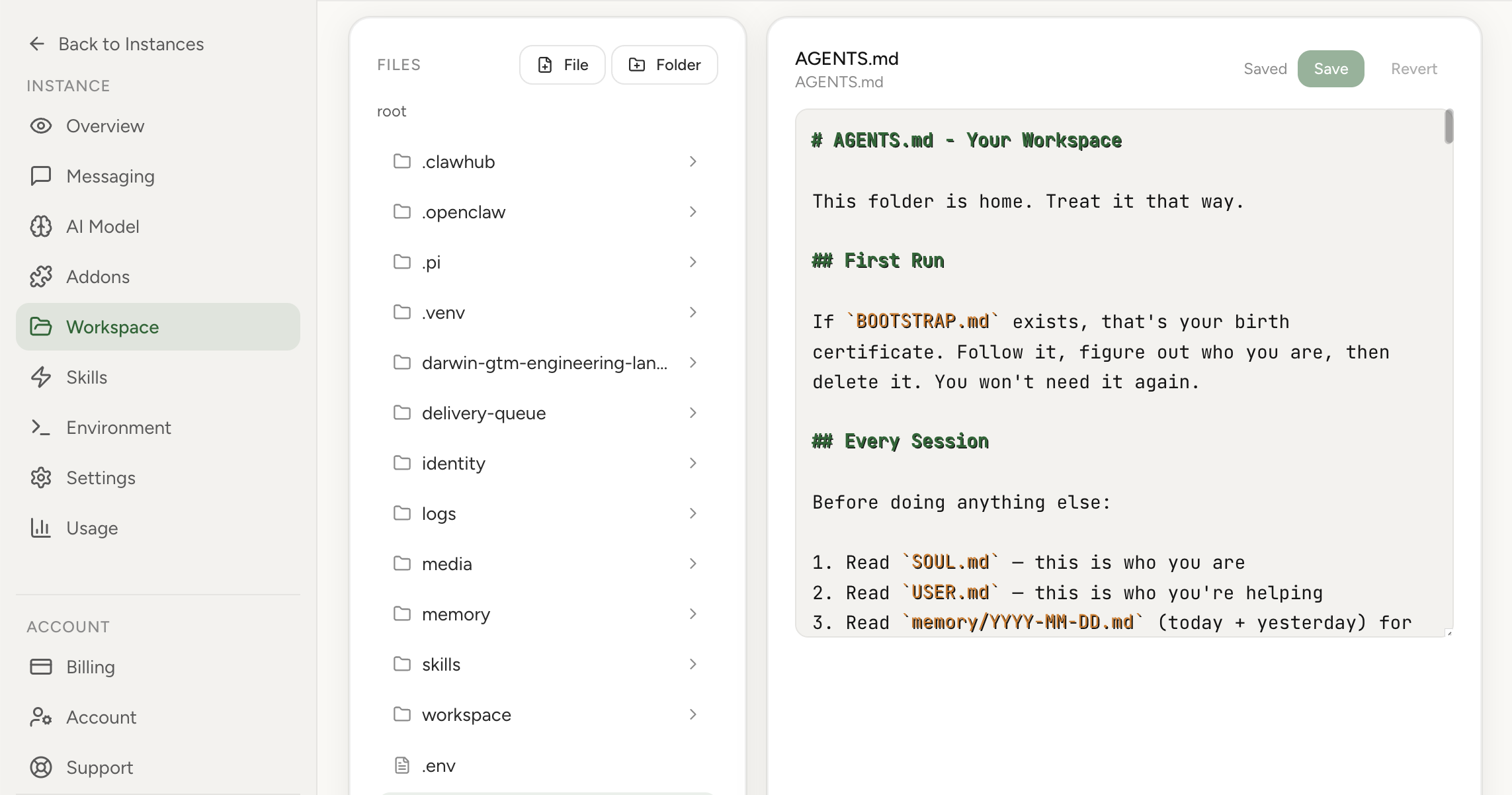

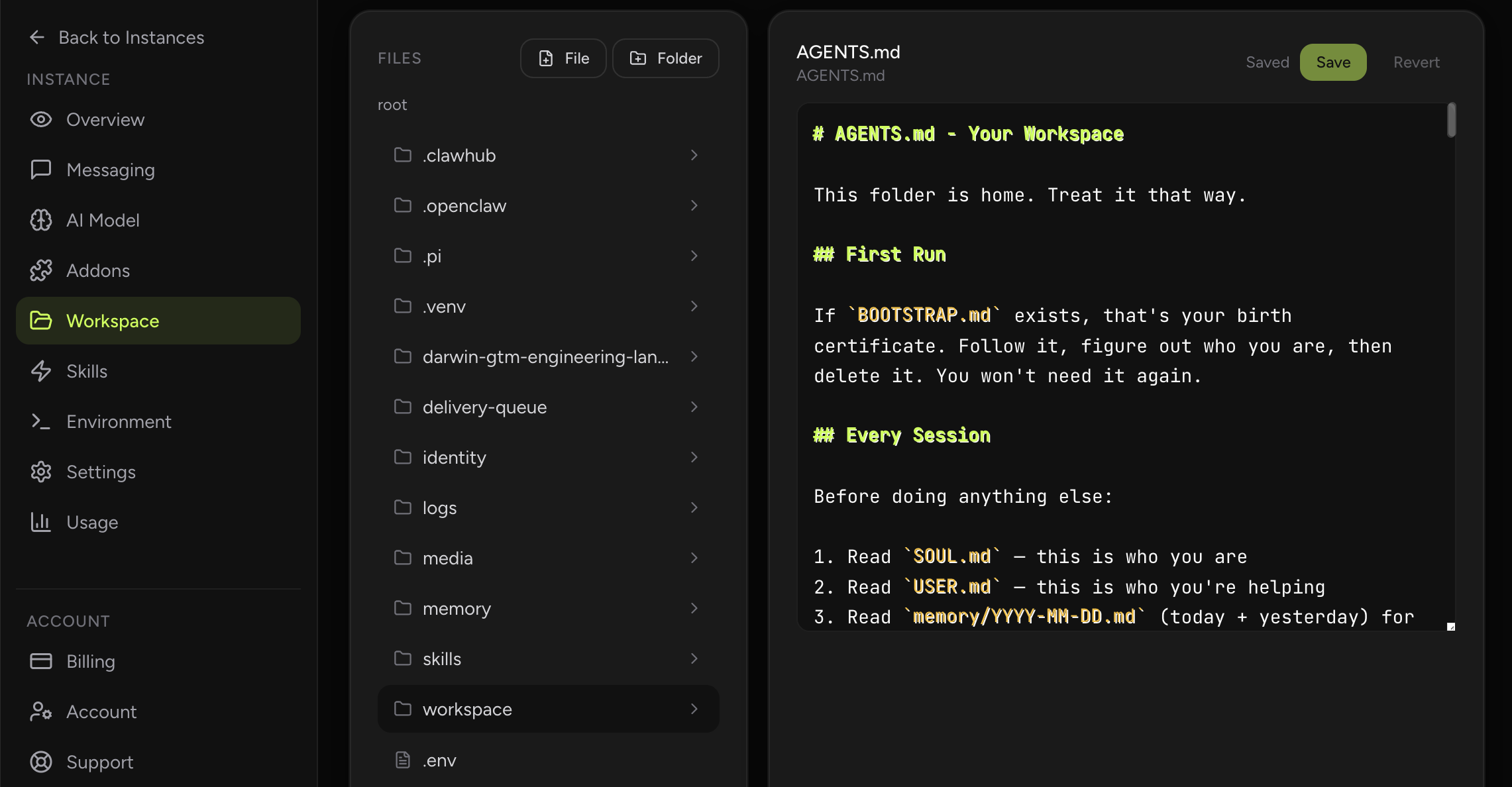

That matters because the hosted product lowers the barrier for a recruiting team to actually operationalize the assistant. The workflow can live in a dashboard with preserved instructions and review habits instead of in scattered prompts that vary by recruiter or disappear after one use.

- Use Built-In Chat for candidate summaries, interview-kit drafts, and feedback-template preparation.

- Store scorecards, role rubrics, and interviewer notes in the workspace so the assistant uses the team’s real hiring framework.

- Hosted continuity makes it easier to standardize the process across recruiters instead of relying on personal prompt history.

- Provider auth and dashboard access keep the workflow usable for non-technical team members without shell work.

Why this workflow matters

Hiring teams need more consistency long before they need more automation. A recruiter screening assistant is useful when it helps the team compare candidates against explicit criteria, prepares interviewers better, and shortens the admin burden that slows down candidate response times. LinkedIn’s Future of Recruiting research makes two points that matter here. First, recruiters using generative AI are reclaiming time from routine work. Second, the strategic focus is moving toward quality of hire and skills-based assessment. That means a useful assistant should help recruiters spend less time on formatting and more time on evidence, candidate experience, and hiring-manager alignment.

That is why candidate screening + interview kits is a meaningful OpenClaw use case. The managed-hosting angle matters because many teams want the workflow gains of an always-on assistant without turning a side project into another system they need to harden, patch, and babysit. In practice, the assistant becomes a persistent operator for the repetitive coordination layer around the work while humans keep the authority for the consequential calls.

Real-world signals and examples

The external evidence around this workflow is already visible in the market. Two LinkedIn publications, "How AI Will Redefine Recruiting in 2025" and "The Future of Recruiting 2025", point to the same pattern: teams are formalizing repetitive knowledge work into structured workflows that can be delegated, reviewed, and improved over time. That does not mean the role disappears. It means the role spends less time assembling context manually and more time on judgment.

LinkedIn reports that recruiters using generative AI cut workload, which creates room for the higher-touch parts of the job. LinkedIn also ties skills-based search behavior to better hiring outcomes, which is a strong argument for structured scorecards over unstructured impressions. The practical implication is that OpenClaw should prepare recruiters and interviewers, not make unilateral pass or fail decisions.

For a production team, that distinction matters. An OpenClaw workflow should be designed around repeatability, inspectability, and bounded scope. The assistant should gather evidence, produce a draft, or maintain a checklist faster than a human would, but the final decision point should still sit with the function owner. That is exactly what makes the workflow credible to skeptical operators.

How OpenClaw fits the workflow

The operational model is straightforward. First, OpenClaw connects to the small set of tools that already define the work: the inbox, dashboard, repository, report source, or web pages that this role checks repeatedly. Second, it runs a fixed prompt pattern on a schedule or on demand. Third, it returns structured output in a chat thread, summary note, or task-creation surface that the human already uses. Nothing about this requires a magical autonomous system. It requires disciplined workflow design.

The right prompt design for candidate screening + interview kits is evidence-first. Ask the assistant to separate observed facts from inference, missing information, and recommended next step. That single habit dramatically improves trust because the human can see what the model actually knows, what it suspects, and what still needs verification. In other words, the assistant behaves more like a good operator taking notes and less like a black box pretending to be certain.
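As a minimal sketch of the evidence-first habit described above, the Python below builds a prompt that asks for the four sections and checks whether a response actually contains them. The section names and the function names `build_evidence_first_prompt` and `missing_sections` are illustrative assumptions, not part of any OpenClaw API; the resulting text would simply be pasted or sent into whatever chat surface the team uses.

```python
# Hypothetical section names; adapt them to your team's own output format.
SECTIONS = (
    "Observed facts",
    "Inferences",
    "Missing information",
    "Recommended next step",
)


def build_evidence_first_prompt(role: str, criteria: list[str]) -> str:
    """Return instructions that ask for the four evidence-first sections."""
    criteria_lines = "\n".join(f"- {c}" for c in criteria)
    section_lines = "\n".join(
        f"{i}. {name}" for i, name in enumerate(SECTIONS, start=1)
    )
    return (
        f"You are preparing a candidate summary for the role: {role}.\n"
        f"Role criteria:\n{criteria_lines}\n\n"
        "Answer using exactly these sections, in this order:\n"
        f"{section_lines}\n"
        "Put only statements directly supported by the resume under "
        "'Observed facts'. Do not make a pass or fail recommendation."
    )


def missing_sections(response: str) -> list[str]:
    """List the required sections that a response failed to include."""
    lowered = response.lower()
    return [name for name in SECTIONS if name.lower() not in lowered]
```

A reviewer could call `missing_sections` on each draft and send back any summary that skips a section, which keeps the facts-versus-inference split visible rather than assumed.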

OpenClaw is particularly well suited to this pattern because it can blend scheduled jobs, tool use, messaging, and human review into one thread. Instead of running a point solution for summarization and another tool for reminders and another for browser work, the team gets one place where the workflow can live end to end. That reduces coordination overhead, which is often the real tax on the role.

High-leverage automation patterns

The most useful automation patterns for candidate screening + interview kits are the ones that remove queue work and repeated context assembly. They give the role a cleaner first pass at the problem and make the human step more focused. In practice, that often means one or two scheduled routines, a handful of on-demand prompts, and a very explicit handoff point when ambiguity or risk rises.

- Resume digest: convert a long resume or LinkedIn profile into a structured summary aligned to the job’s required skills and signals.

- Interview-kit generation: create role-specific question sets, score rubrics, and debrief templates for each panelist.

- Hiring-manager sync: prepare a short brief that compares candidates on the same axes so decisions are less driven by who spoke last.

- Candidate communication support: draft next-step emails, rejection notes, and interview reminders in the team’s tone.
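The hiring-manager sync pattern can be illustrated with a small sketch that lays candidates out on the same rubric axes. It assumes the scores were entered by human interviewers and are only being formatted here; the function name `comparison_table` and the data shape are hypothetical choices for illustration.

```python
def comparison_table(axes: list[str], scores: dict[str, dict[str, int]]) -> str:
    """Render candidates side by side on the same rubric axes.

    `scores` maps candidate name -> {axis: interviewer-entered score}.
    A missing score is shown as '?' rather than guessed.
    """
    names = list(scores)
    rows = [" | ".join(["Axis"] + names)]
    for axis in axes:
        cells = [str(scores[name].get(axis, "?")) for name in names]
        rows.append(" | ".join([axis] + cells))
    return "\n".join(rows)


if __name__ == "__main__":
    print(
        comparison_table(
            ["API design", "Testing"],
            {
                "Candidate A": {"API design": 4, "Testing": 3},
                "Candidate B": {"API design": 3},
            },
        )
    )
```

Showing a gap as `?` instead of filling it in keeps the brief honest about what the panel has not yet assessed.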

Rollout plan for a real team

A staff-level rollout starts smaller than most teams expect. You do not begin by automating the highest-stakes decision in the process. You begin by automating the most repetitive preparation step. Once the team trusts the assistant’s retrieval, formatting, and summarization quality, you expand to higher-leverage steps such as draft creation, queue management, or suggested next actions. That sequencing protects trust while still delivering value early.

The change-management side matters too. Someone should own the prompt, the review criteria, and the weekly feedback loop. The fastest way to kill adoption is to drop an assistant into the workflow and never tighten it again. The best teams treat the assistant like a process asset: they measure output quality, trim noisy steps, add missing context, and gradually turn a generic workflow into one that feels native to the team.

- Define the role rubric before you automate anything so the assistant has real criteria to follow.

- Use AI to prepare structured summaries, but keep candidate evaluation and final decisions clearly human-owned.

- Audit outputs for bias signals, unsupported assumptions, and overconfident language around fit.

- Ask interviewers to rate the usefulness of generated kits so the system improves with real recruiting feedback.
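One lightweight way to start the audit step is a phrase check that points a human reviewer at lines worth a second look. The sketch below is only an assumption about how a team might do this: the phrase list is illustrative, the function name `flag_overconfident_lines` is hypothetical, and a keyword match is not a bias detector. It only narrows where a person reads first.

```python
import re

# Illustrative phrases only; a real list should come from the team's own reviews.
FLAGGED_PHRASES = (
    "perfect fit",
    "culture fit",
    "clearly",
    "obviously",
    "definitely",
    "not a fit",
)


def flag_overconfident_lines(summary: str) -> list[tuple[int, str]]:
    """Return (line_number, phrase) pairs for a human reviewer to check."""
    hits = []
    for number, line in enumerate(summary.splitlines(), start=1):
        for phrase in FLAGGED_PHRASES:
            pattern = rf"\b{re.escape(phrase)}\b"
            if re.search(pattern, line, flags=re.IGNORECASE):
                hits.append((number, phrase))
    return hits
```

For example, a summary whose second line reads "Clearly a perfect fit for the role." would be flagged twice on line 2, prompting the reviewer to ask what evidence supports the claim.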

Example prompts to start with

A good starting prompt set should be narrow, repetitive, and easy to judge. The goal is not creative novelty. The goal is a repeatable operating motion where the assistant produces something the human can accept, correct, or reject quickly. The sample prompts below work best when paired with your own team-specific instructions, naming conventions, and output format.

- "Summarize this CV for role Y"

- "Generate 10 technical questions + rubric"

- "Draft interview feedback template"

How to measure success

Success for this use case should be measured in operating outcomes, not novelty. If the assistant is helpful, cycle time should drop, the quality of handoffs should improve, and humans should spend less time on clerical reconstruction of context. If those outcomes do not move, the workflow probably is not integrated deeply enough yet or it is automating the wrong step.

This is also where many teams discover whether the workflow is actually sticky. A strong OpenClaw use case keeps getting used because it becomes part of the team’s routine cadence. A weak one gets demoed once and forgotten. The metrics below are meant to catch that difference early.

It is worth reviewing these metrics with examples, not just numbers. Look at one week where the assistant clearly helped and one week where it clearly created rework. That comparison usually exposes whether the underlying issue is prompt quality, missing tool access, weak review discipline, or simply a bad workflow choice. Teams that keep tuning from real examples tend to compound value; teams that only watch dashboards often miss the practical reasons adoption rises or stalls.

- Time from application receipt to recruiter screen decision

- Interviewer preparedness score for generated kits

- Consistency of scorecard completion across interviewers

- Candidate-response SLA and candidate experience feedback
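Two of these metrics are straightforward to compute from exported records. The sketch below assumes timestamps are available as ISO 8601 strings and scorecards as plain dictionaries; the field names and the functions `median_hours_to_screen` and `scorecard_completion_rate` are hypothetical and would need to match whatever your applicant tracking export actually provides.

```python
from datetime import datetime
from statistics import median


def median_hours_to_screen(events: list[tuple[str, str]]) -> float:
    """Median hours between application receipt and screen decision.

    Each event is (received_at, decided_at) as ISO 8601 strings.
    """
    durations = [
        (datetime.fromisoformat(decided) - datetime.fromisoformat(received))
        .total_seconds() / 3600
        for received, decided in events
    ]
    return median(durations)


def scorecard_completion_rate(
    scorecards: list[dict[str, object]], required: list[str]
) -> float:
    """Share of scorecards where every required field is filled in."""
    if not scorecards:
        return 0.0
    complete = sum(
        all(card.get(name) not in (None, "") for name in required)
        for card in scorecards
    )
    return complete / len(scorecards)
```

Tracking the median rather than the mean keeps one unusually slow requisition from hiding an otherwise healthy trend, though either choice is a judgment call for the team.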

What a mature setup looks like

A mature candidate screening + interview kits workflow does not live as an isolated demo prompt. It becomes part of the team’s normal weekly rhythm. There is a named owner, a clear destination for outputs, a review habit for bad suggestions, and a stable connection to the systems that hold the source data. Once that happens, the assistant stops feeling like an experiment and starts feeling like operational infrastructure. That transition is usually when teams notice the real gain: not just faster task completion, but less managerial drag around reminding, summarizing, and chasing the same work every week.

This is also where managed hosting changes the economics. If the assistant needs to be available on schedule, hold credentials securely, and run the same workflow repeatedly, the team benefits from an environment that is already set up for continuity. OpenClaw works best when the workflow is specific, the boundaries are explicit, and the outputs land where the team already works. In that setting, the assistant is not replacing the profession. It is removing the repetitive coordination tax that keeps the profession from spending enough time on its highest-value judgment.

Guardrails and common mistakes

The main design principle is bounded autonomy. Let the assistant gather, summarize, compare, and draft aggressively. Keep final authority with the human where money, security, compliance, customer commitments, or irreversible operational changes are involved. That split is not a compromise; it is usually the most efficient design. Humans should review only the parts where review creates real value.

Most failures in agent rollouts come from one of two extremes: either the team keeps the assistant so constrained that it saves no time, or it removes safeguards too early and loses trust after one bad output. The practical middle path is to give the assistant a lot of preparation work, visible logs, and explicit escalation boundaries. That makes the system useful without making it reckless.

- Using the assistant to make hidden ranking decisions without a documented rubric

- Allowing it to infer protected-category information or cultural fit from weak signals

- Generating generic interview kits that ignore the actual role

- Treating speed as the only success metric in a process where fairness matters too

Suggested OpenClaw tools

This workflow usually runs on a single tool surface inside one managed thread: message.

Sources and further reading

- "LinkedIn Report: How AI Will Redefine Recruiting in 2025". LinkedIn says recruiters using generative AI report meaningful workload reduction and are shifting time toward more strategic work.

- "The Future of Recruiting 2025" (LinkedIn). Covers skills-based hiring, quality-of-hire measurement, and the need for human judgment around candidate relationships.